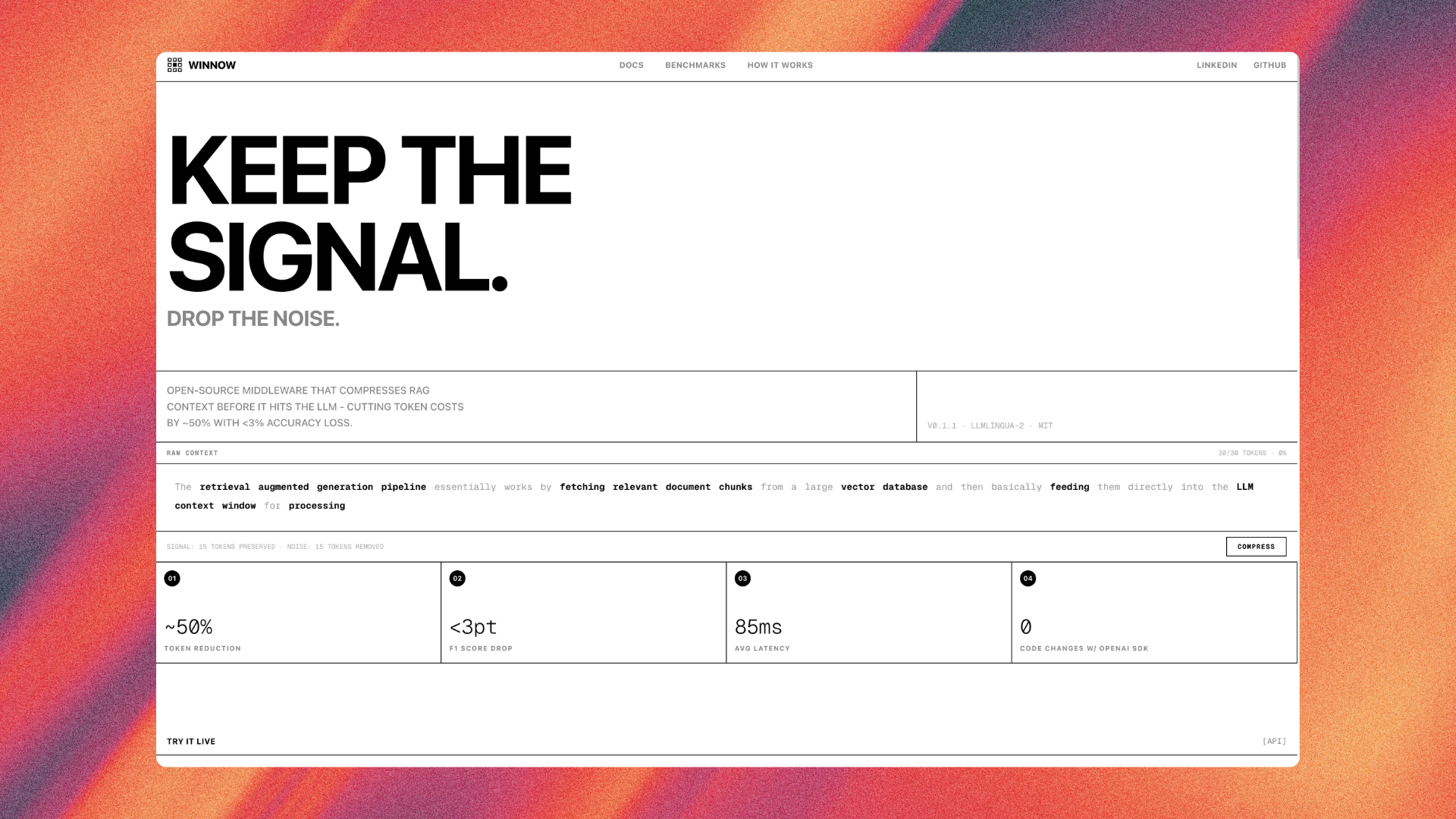

Winnow

Keep the signal. Drop the noise.

Winnow compresses RAG prompts before they reach your LLM, cutting token costs by 50% or more while preserving meaning. It combines question-guided filtering with LLMLingua-2 for semantic accuracy.

Key features:

• FastAPI server with OpenAI-compatible proxy
• Batch compression API
• Question-aware filtering that keeps answer-relevant tokens
• Docker self-hosting and a pip-installable SDK
• MIT licensed
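To give a rough feel for what question-aware filtering means, here is a minimal Python sketch. It is not Winnow's implementation or API: the names `content_words` and `filter_passages`, the small stopword list, and the plain word-overlap score are all assumptions made for illustration, whereas Winnow is described as relying on LLMLingua-2 for the actual compression.

```python
import math
import re

# Hypothetical stopword list, just enough for the example below.
STOPWORDS = {"a", "an", "and", "are", "in", "is", "of", "the", "to", "what"}


def content_words(text):
    """Lowercase the text and return its set of non-stopword tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def filter_passages(question, passages, keep_ratio=0.5):
    """Keep the passages that share the most content words with the question.

    This is a toy stand-in for question-guided filtering: a real system
    would score relevance with a learned model rather than word overlap.
    """
    q_words = content_words(question)
    scored = [(len(q_words & content_words(p)), i) for i, p in enumerate(passages)]
    keep_n = max(1, math.ceil(len(passages) * keep_ratio))
    # Highest overlap first; ties are broken by original position.
    top = sorted(scored, key=lambda s: (-s[0], s[1]))[:keep_n]
    # Restore the original order so the compressed context still reads naturally.
    return [passages[i] for i in sorted(i for _, i in top)]


if __name__ == "__main__":
    retrieved = [
        "Paris is the capital of France.",
        "Bananas are yellow.",
        "France borders Spain and Italy.",
        "The sky is blue.",
    ]
    for passage in filter_passages("What is the capital of France?", retrieved):
        print(passage)
```

With `keep_ratio=0.5`, half of the retrieved passages are dropped before the prompt is assembled, which is the kind of saving the description above refers to, although real savings depend on the data and the compression model.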

“what does not kill me makes me stronger”
