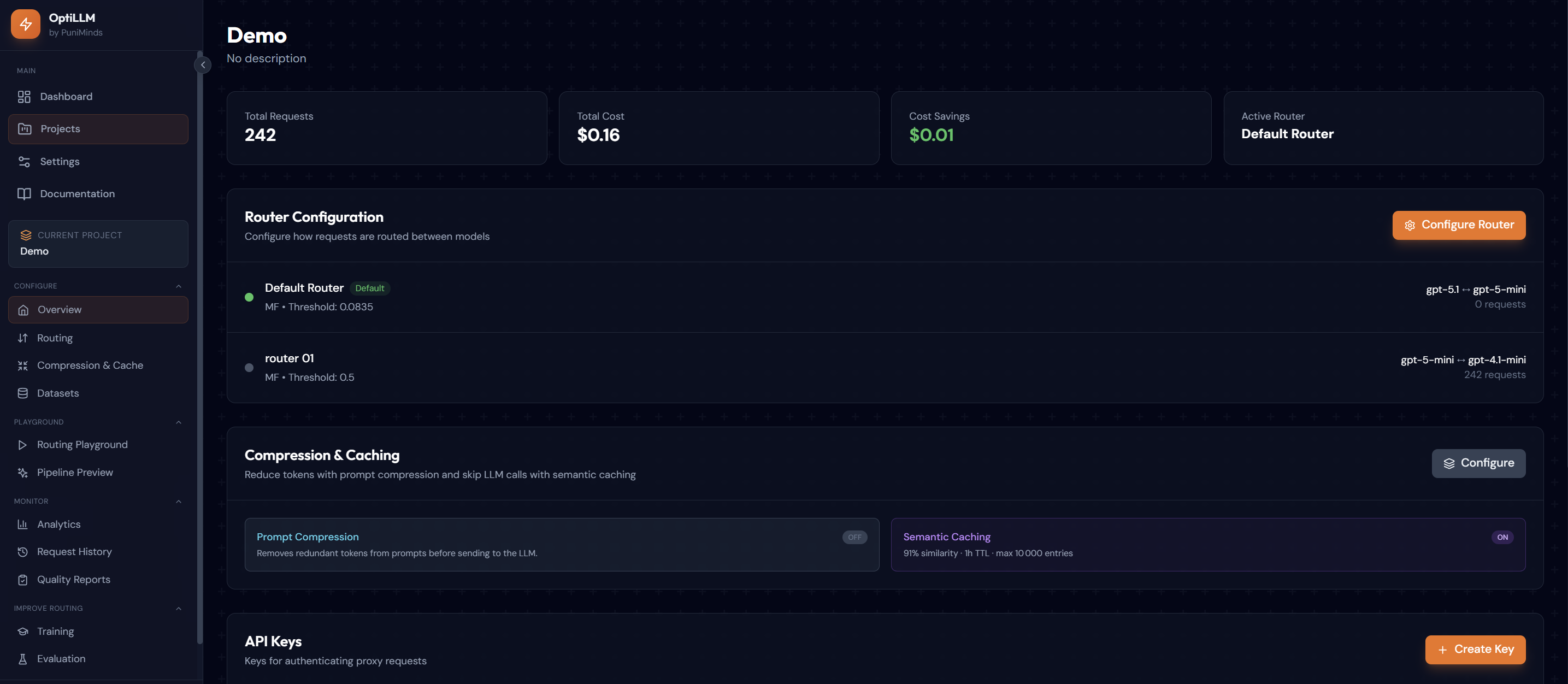

OptiLLM

Intelligent LLM Cost Optimization Platform

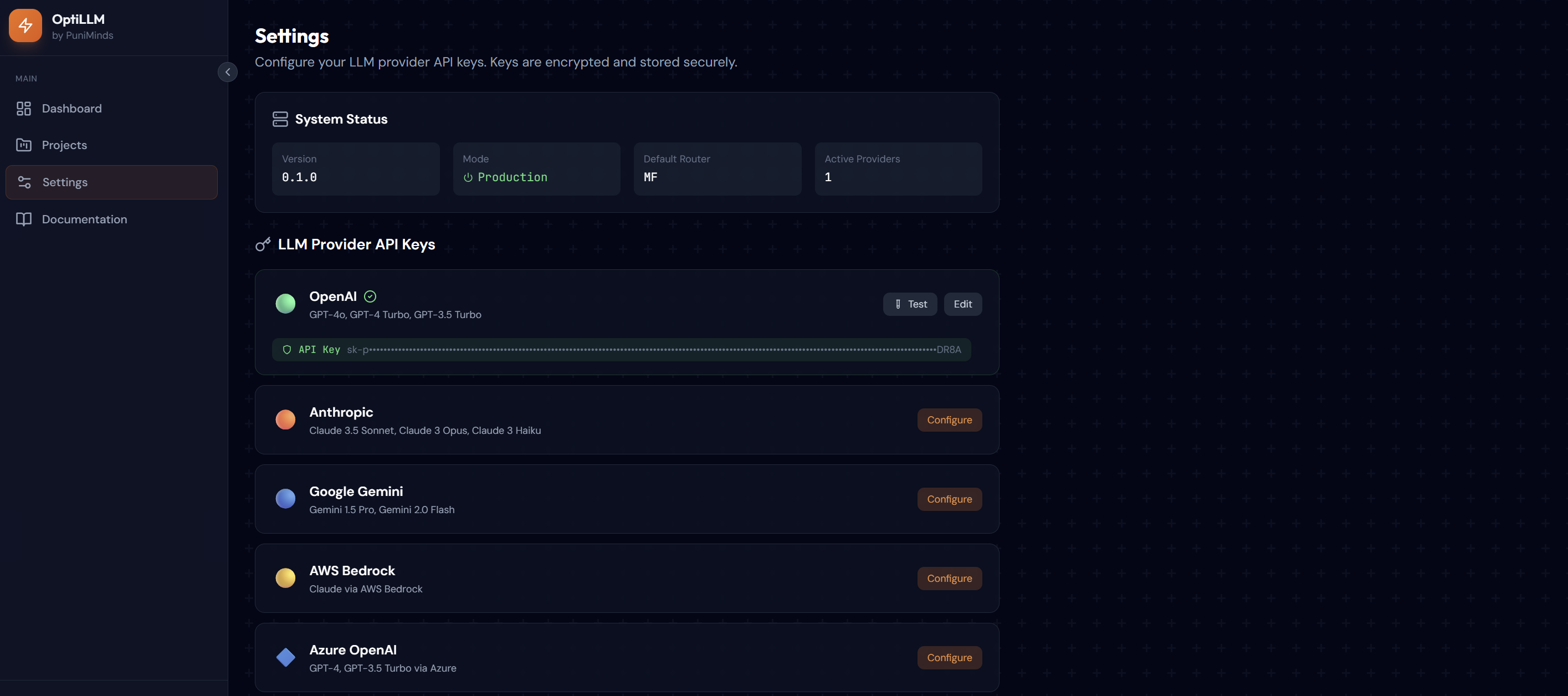

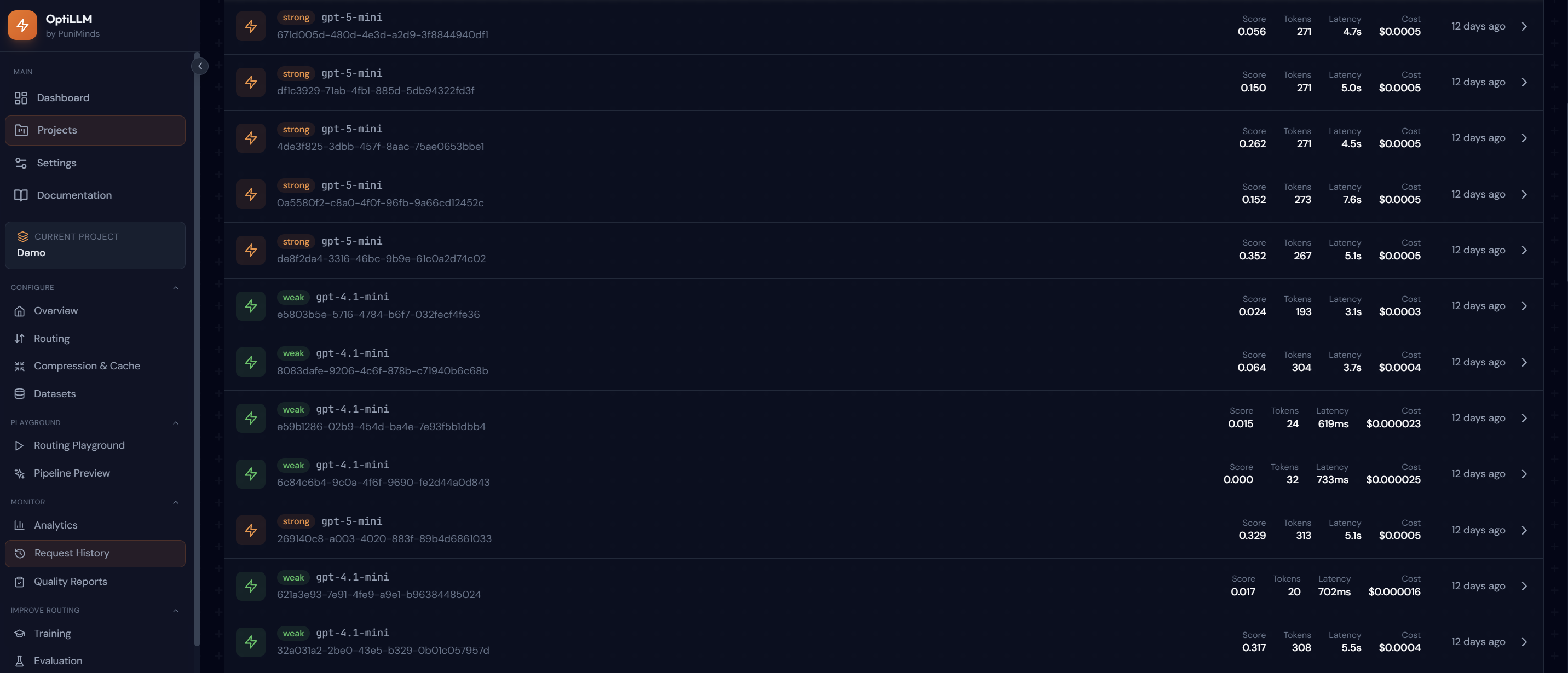

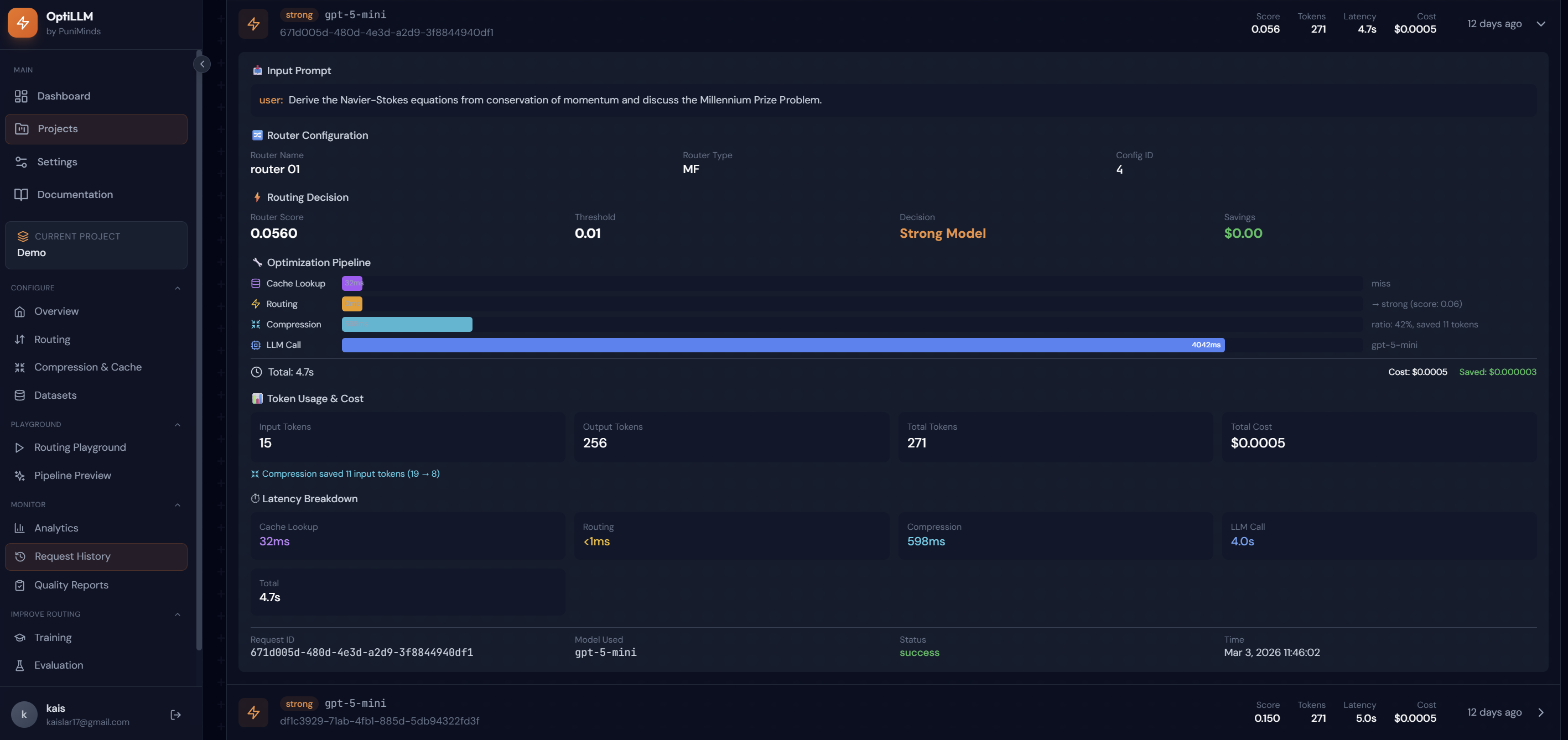

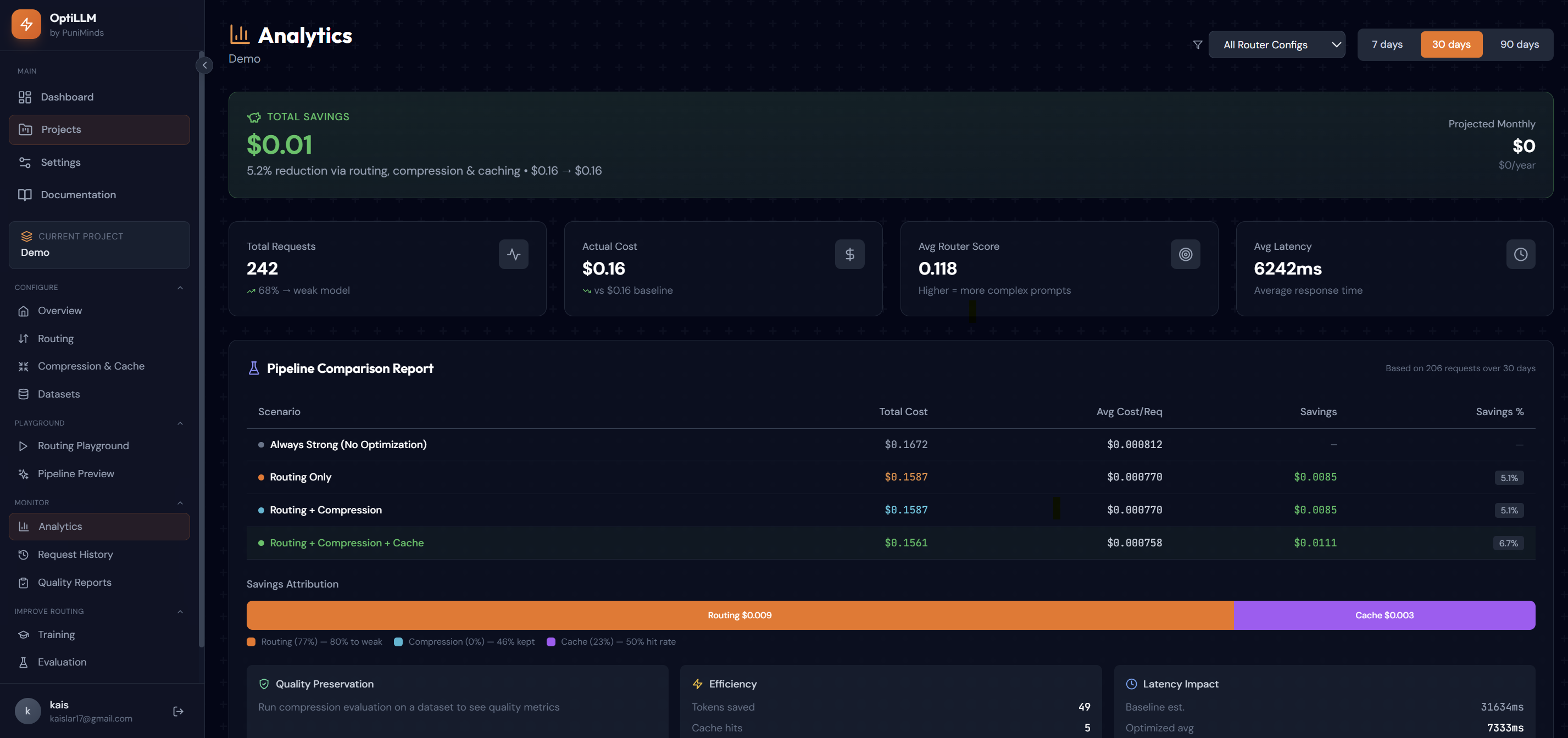

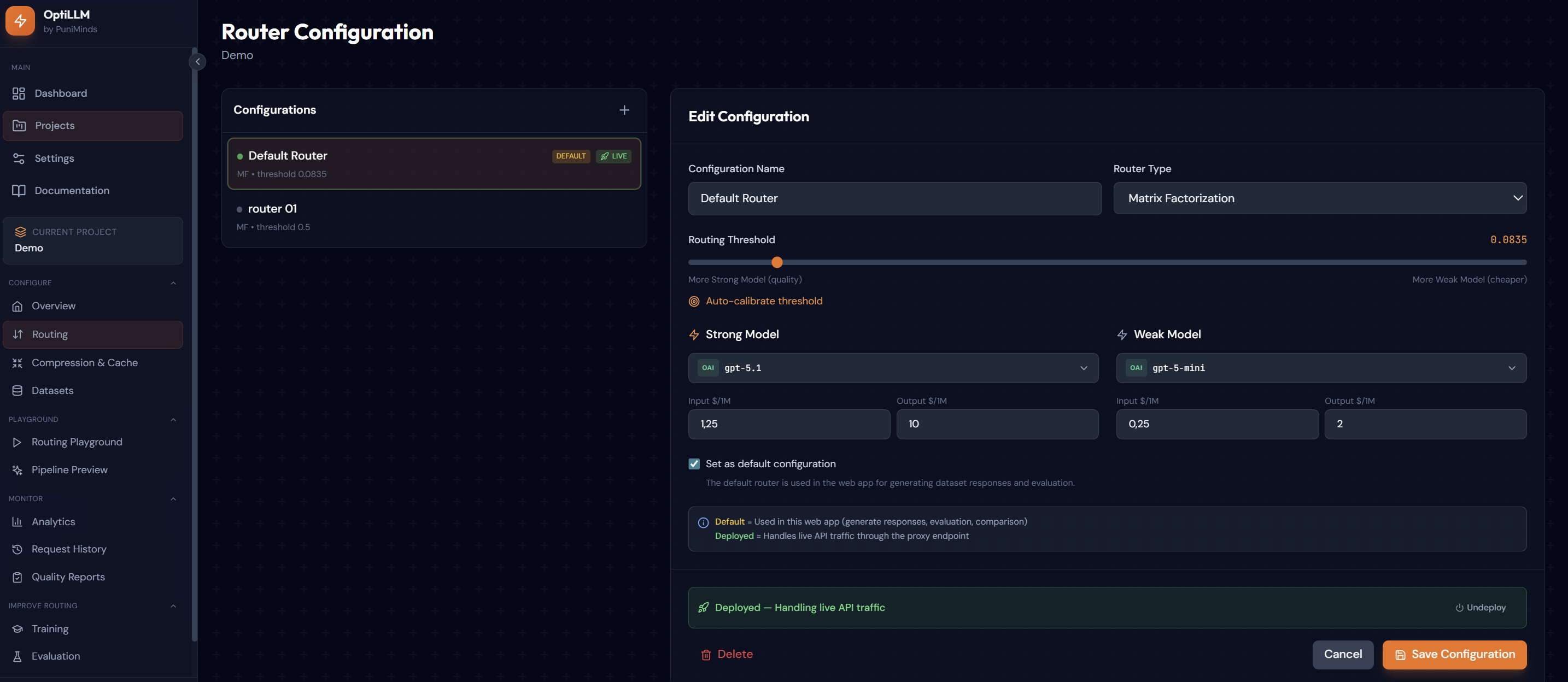

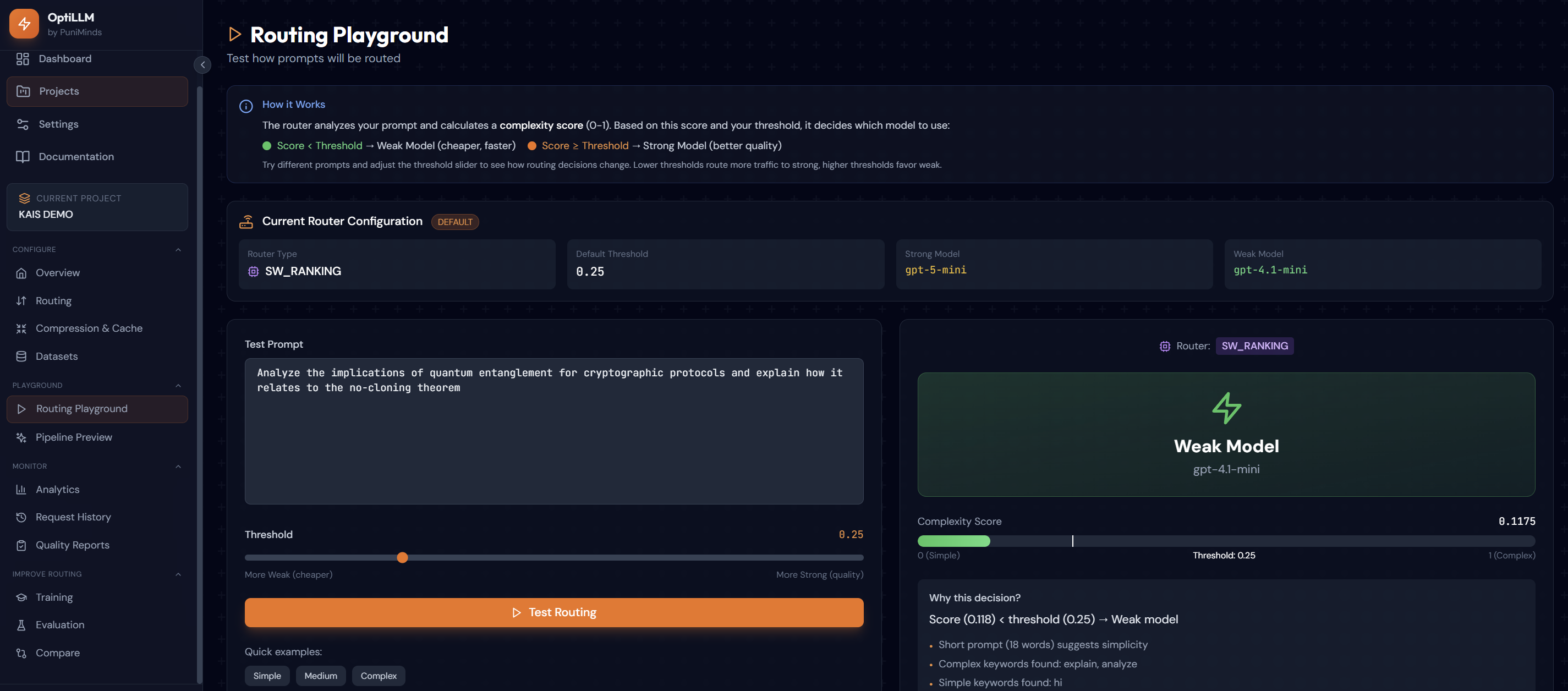

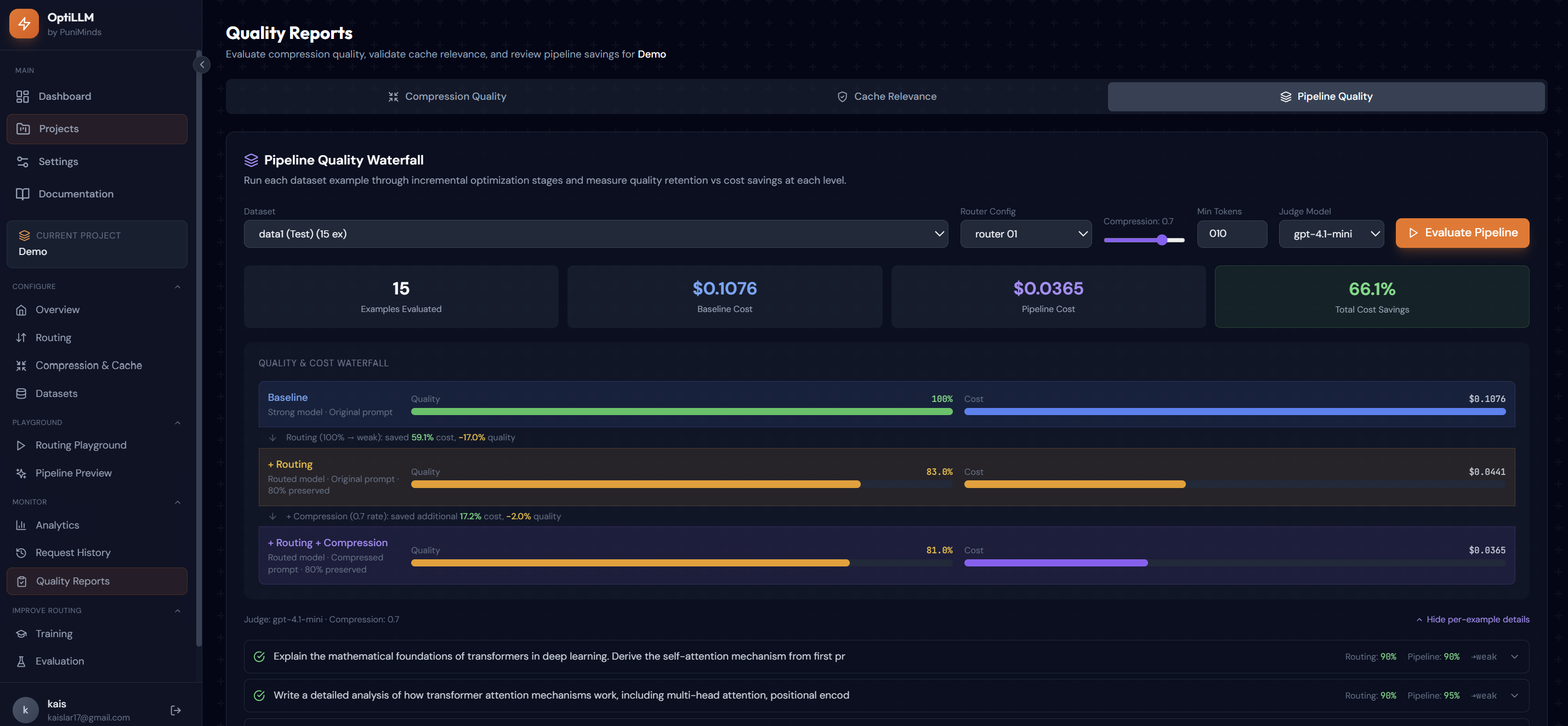

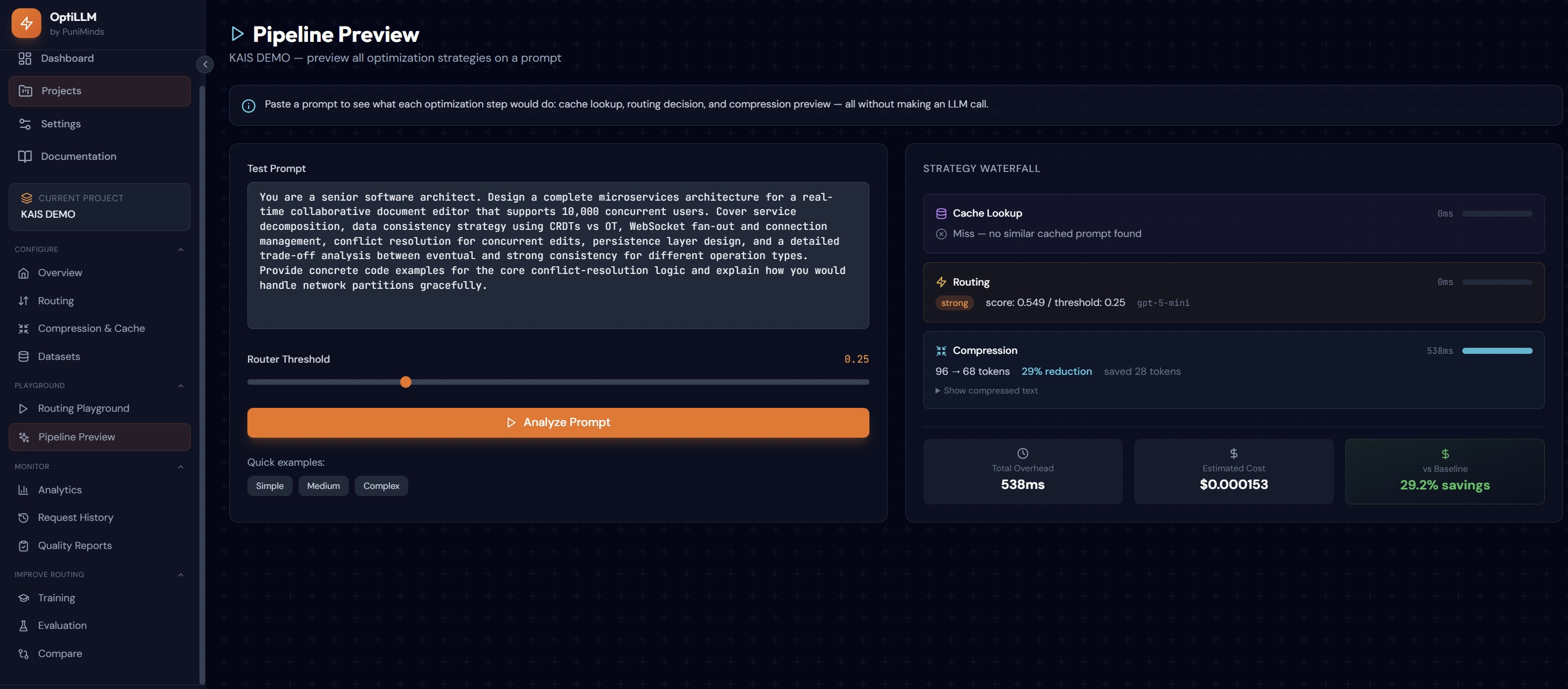

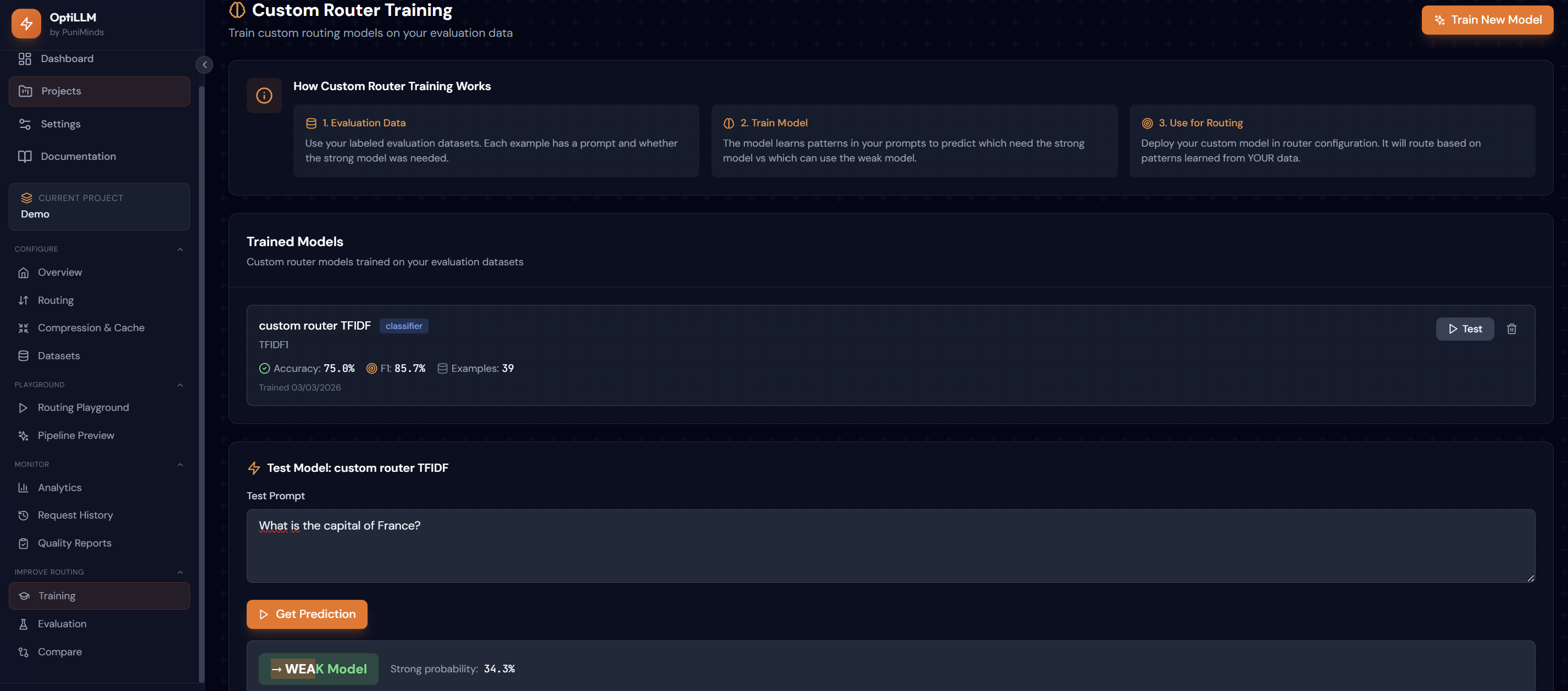

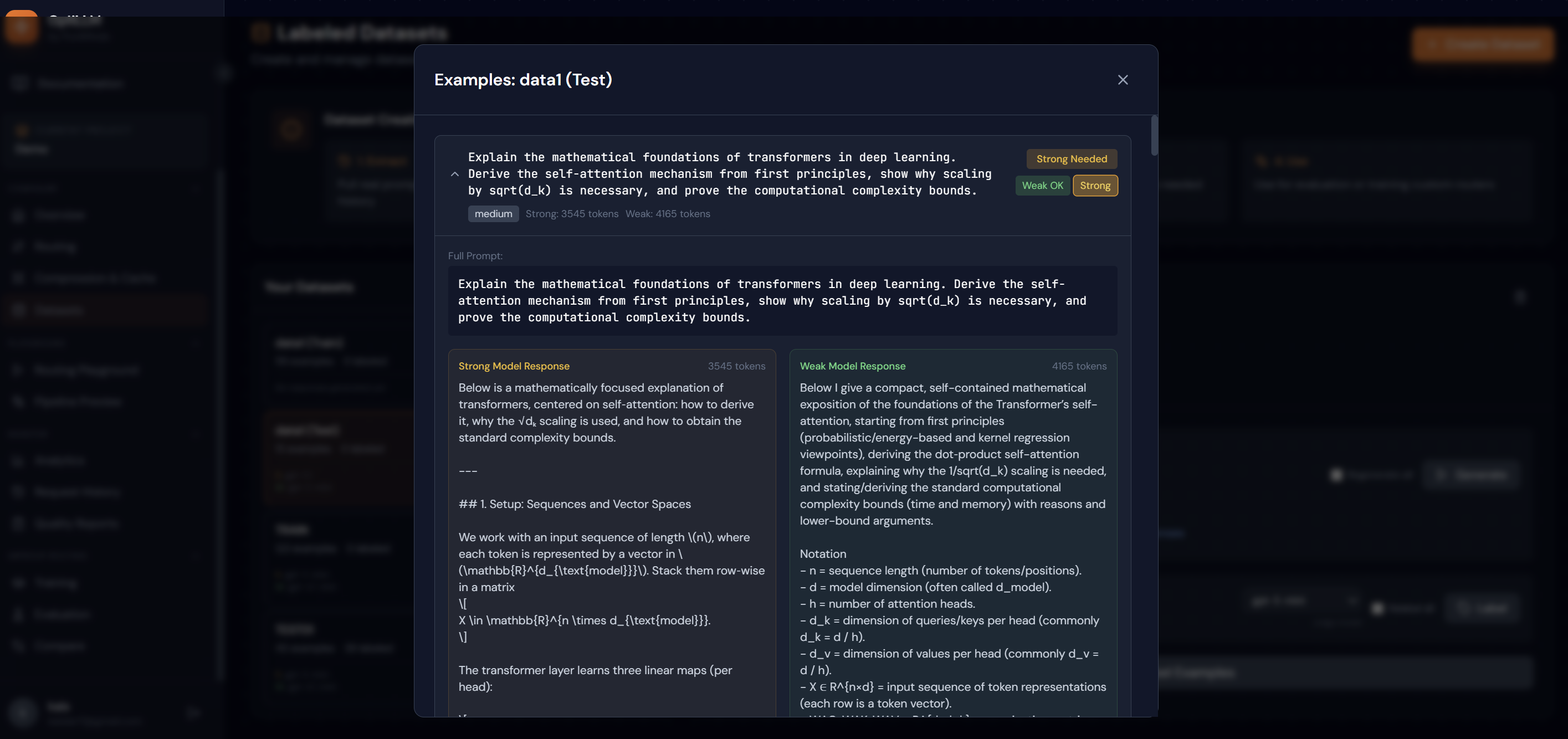

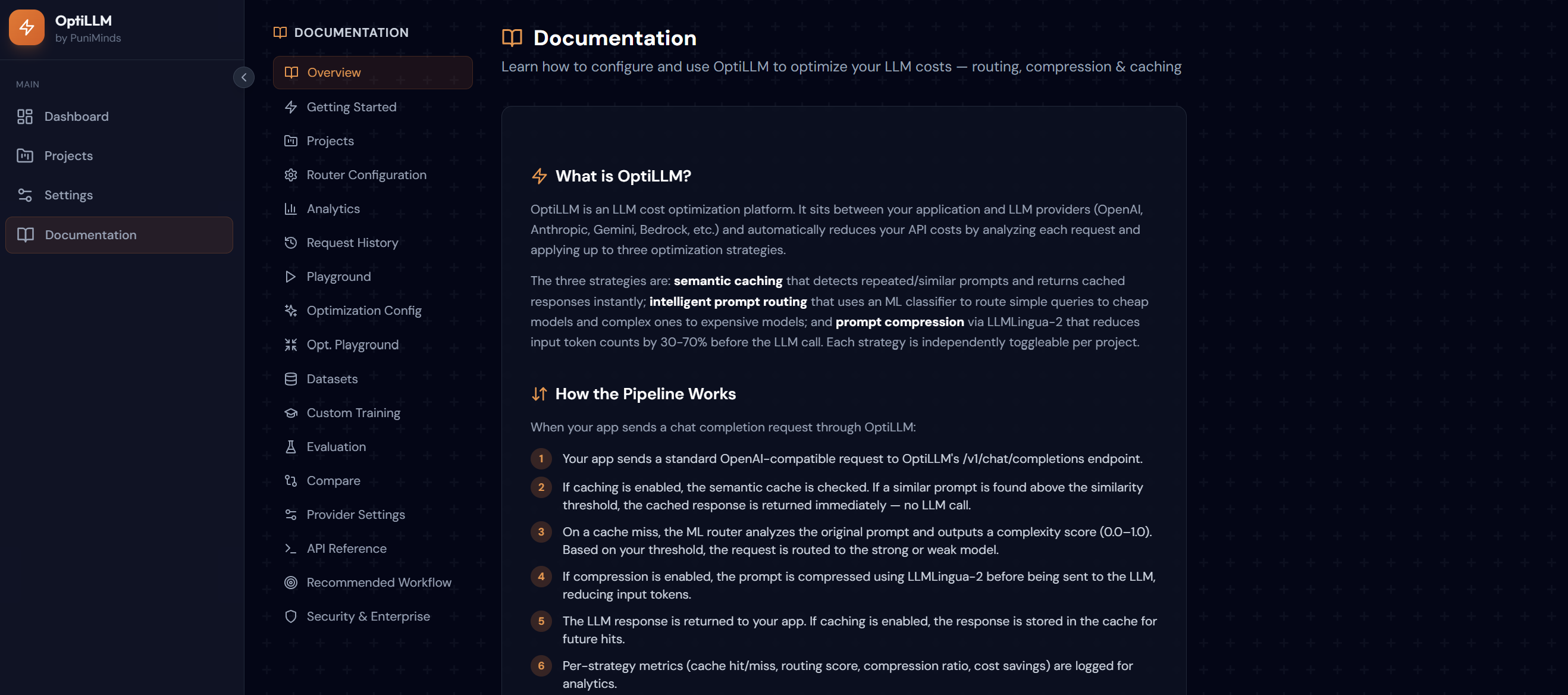

OptiLLM automatically reduces LLM API costs, by 50% or more in some cases, without sacrificing quality. It routes each prompt to the cheapest capable model using ML classifiers, compresses tokens with LLMLingua-2, and caches semantically similar queries with FAISS vector search. It is a drop-in, OpenAI-compatible proxy, so no code changes are needed. It also includes evaluation tools, analytics dashboards, and custom router training to continuously optimize your cost-quality tradeoff.
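To make the routing and caching ideas above concrete, here is a minimal sketch in plain Python. It is not OptiLLM's implementation: the model names, prices, and capability tiers are invented, `estimate_difficulty` is a keyword heuristic standing in for a trained ML classifier, and a toy bag-of-words embedding with a linear scan stands in for real embeddings and FAISS vector search.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    cost_per_1k_tokens: float  # hypothetical price, for illustration only
    capability: int            # higher tier handles harder prompts


# Hypothetical catalogue; none of these names come from OptiLLM.
MODELS = [
    Model("small-model", 0.10, 1),
    Model("medium-model", 0.50, 2),
    Model("large-model", 2.00, 3),
]


def estimate_difficulty(prompt):
    """Stand-in for an ML classifier: crude keyword and length heuristic."""
    text = prompt.lower()
    if any(k in text for k in ("prove", "derive", "refactor")):
        return 3
    if len(text.split()) > 20:
        return 2
    return 1


def route(prompt):
    """Pick the cheapest model whose capability covers the estimated need."""
    need = estimate_difficulty(prompt)
    capable = [m for m in MODELS if m.capability >= need]
    return min(capable, key=lambda m: m.cost_per_1k_tokens)


def embed(text):
    """Toy L2-normalised bag-of-words vector (assumption, not a real embedding)."""
    counts = {}
    for word in text.lower().split():
        word = word.strip(".,?!")
        if word:
            counts[word] = counts.get(word, 0) + 1
    norm = math.sqrt(sum(v * v for v in counts.values())) or 1.0
    return {w: v / norm for w, v in counts.items()}


def cosine(a, b):
    """Dot product of two normalised sparse vectors."""
    return sum(v * b.get(w, 0.0) for w, v in a.items())


class SemanticCache:
    """Returns a stored response when a new prompt is similar enough."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs

    def get(self, prompt):
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt, response):
        self.entries.append((embed(prompt), response))
```

A proxy built this way would first call `SemanticCache.get`, and only on a miss call `route` and forward the request to the chosen model. The similarity threshold controls the tradeoff: a lower value yields more cache hits but a higher risk of returning an answer to a different question.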

“what does not kill me makes me stronger”
