Nemotron 3 Super

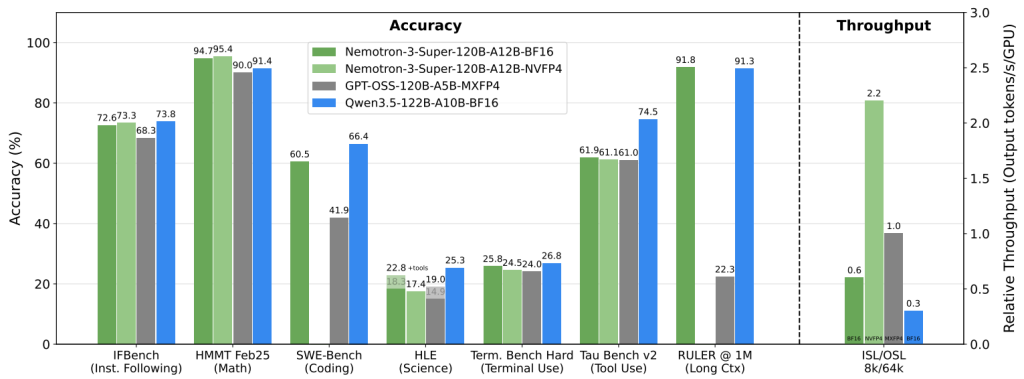

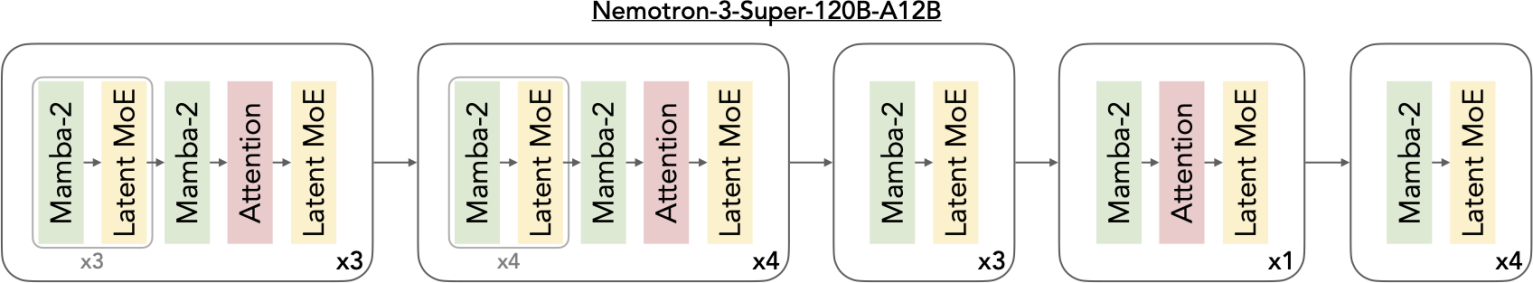

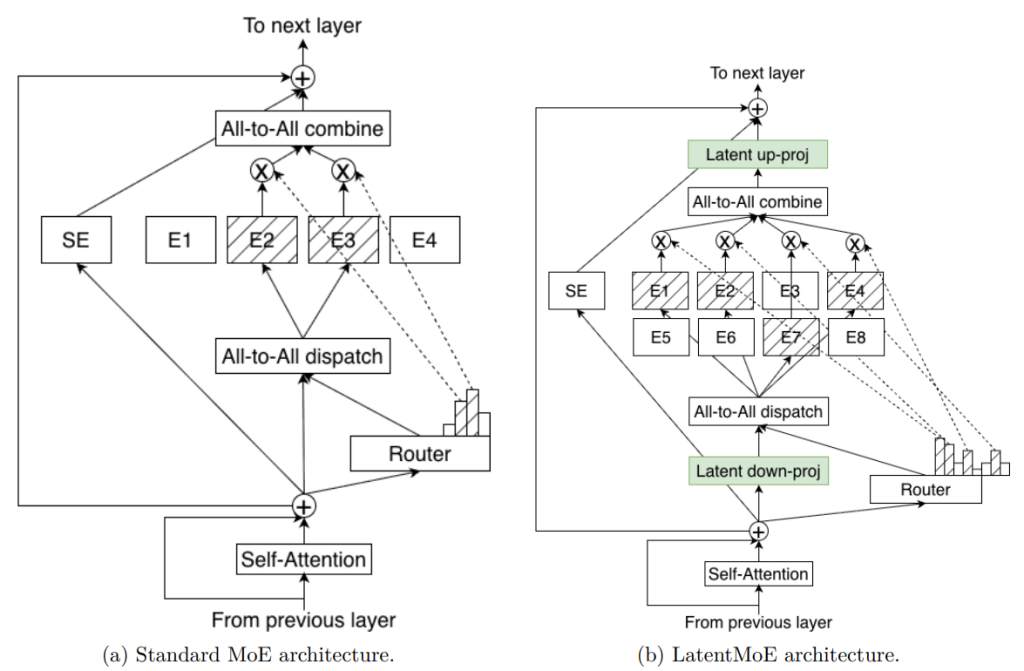

Open hybrid Mamba-Transformer MoE for agentic reasoning

Nemotron 3 Super is NVIDIA's open 120B model with 12B active parameters, a 1M-token context window, and a hybrid Mamba-Transformer MoE design. It is built for coding, long-context reasoning, and multi-agent workloads without the usual thinking tax.

“what does not kill me makes me stronger”
